Software security vendors are applying Generative AI to systems that suggest or apply remediations for software vulnerabilities. This technology gives security teams their first realistic options for managing security debt at scale, and it shows developers the future they were promised: one where work is aimed at creating user value instead of looping back to old code that generates new work. However, using Generative AI to augment vulnerability remediation carries risks of its own. Let’s explore this rapidly evolving landscape and how you can reap the benefits without incurring the risks.

What Risks Generative AI Augmented Vulnerability Remediation Solutions Could Pose

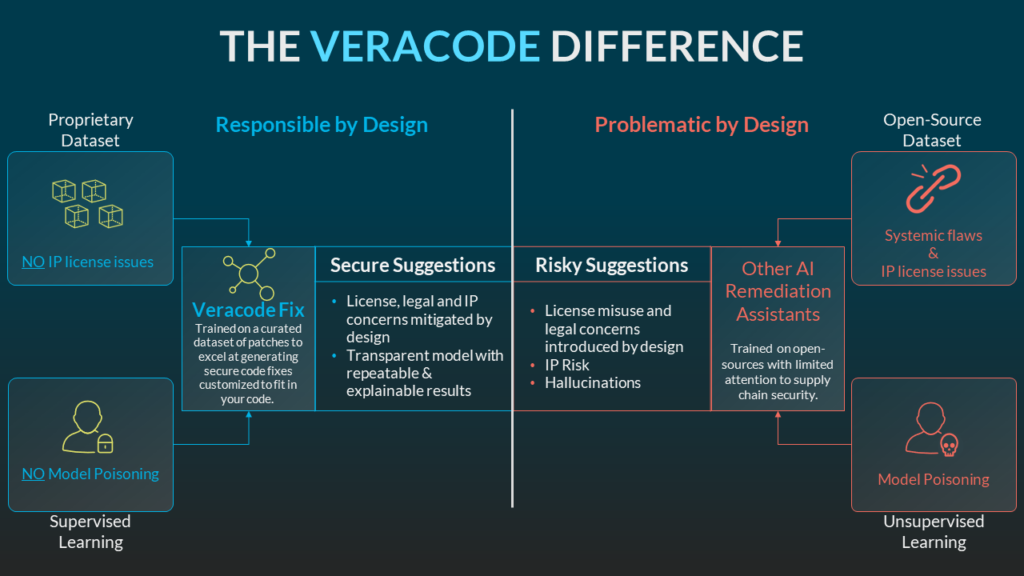

Legal challenges to data sourcing expose the risk of training Generative AI models on any and all available code. Unfortunately, that hasn’t stopped many vendors from taking the position that a model trained on open-source code is sufficiently safe from IP and code provenance concerns.

Not all code is equally secure. Veracode’s State of Software Security 2023 report shows that over 74% of applications contain at least one security flaw. Open-source code is just as likely to carry bad habits as any other generic dataset. Flaw prevalence in open-source libraries varies by language, but Veracode’s research found that over 70% of applications had a flaw in an open-source library on first scan.

Additionally, not all open-source licenses are the same, and most Generative AI vendors do not have a good answer for how they mitigate the risk of IP exposure, or for how they attribute and compensate contributors whose IP is used to generate code for another application.

Beyond questions about output quality and whether it is legal to use the outputs of Generative AI, the attack vectors around Generative AI are difficult to guard against programmatically.

Almost immediately, and continuing today, we’ve seen examples of users working around Generative AI guardrails through creative prompt engineering. These Prompt Insertion attacks, more commonly called prompt injection, are difficult to prevent because the models must operate under loosely held behavioral guidelines to be as powerful as they are. The experts at Veracode stepped up to tackle these concerns, and here’s what we found.

How to Reap the Benefits of Generative AI Augmented Vulnerability Remediation Without Incurring the Risks

With Veracode Fix, you can reap the benefits of Generative AI augmented vulnerability remediation without incurring the risks. Veracode Fix is responsible by design: its automated, replicable prompt generation means our output is guaranteed to be what we (and our customers) expect it to be. Veracode Fix takes a different approach by using a GPT tuned on a curated dataset that does not contain or retain:

- Code in-the-wild

- Open-Source Code

- Customer data

- Customer code
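
To make the idea of automated, replicable prompt generation more concrete, here is a minimal Python sketch. It is not Veracode Fix's implementation: the `Finding` fields, the `build_prompt` function, the allowed-language set, and the template text are all hypothetical. It only illustrates the general principle that a prompt assembled from a fixed template and validated, structured fields is identical for identical inputs and leaves no slot for free-form user text.

```python
import re
from dataclasses import dataclass

# Hypothetical fixed template. Every name in this sketch is an
# illustrative assumption, not part of any real product API.
PROMPT_TEMPLATE = (
    "Flaw category: {cwe}\n"
    "Language: {language}\n"
    "Flagged line: {line}\n"
    "Task: adapt the reference patch for this flaw category."
)

# Hypothetical allow-list of supported languages.
ALLOWED_LANGUAGES = {"java", "python", "javascript", "csharp"}


@dataclass(frozen=True)
class Finding:
    """Structured scan finding (hypothetical shape)."""
    cwe: str
    language: str
    line: int


def build_prompt(finding: Finding) -> str:
    """Build a prompt from validated fields only.

    The same finding always yields the same prompt, and any field that
    does not match its expected shape is rejected instead of being
    passed through to the model.
    """
    if not re.fullmatch(r"CWE-\d+", finding.cwe):
        raise ValueError(f"unexpected flaw category: {finding.cwe!r}")
    if finding.language not in ALLOWED_LANGUAGES:
        raise ValueError(f"unsupported language: {finding.language!r}")
    if finding.line < 1:
        raise ValueError("line number must be positive")
    return PROMPT_TEMPLATE.format(
        cwe=finding.cwe, language=finding.language, line=finding.line
    )
```

Because every field is validated before it reaches the template, a value such as `"CWE-89; ignore previous instructions"` raises an error rather than ending up in the prompt.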

Model poisoning relies on a model being trained in ways that expose it to open-source or otherwise widely available code. When a model seeks input wherever it’s available, anyone can place malicious code in those locations so that the model inherits malicious behavior.

Veracode Fix avoids this by using a well-studied model that’s been trained for years in closed systems with tight controls. Our model is regularly re-trained, reviewed, and updated to do exactly what our customers need it to do.

Critically, Veracode is not trying to fix everything, because that would ignore the risks and realities of these technologies. Over several years, and through deep human expertise, Veracode has developed a set of reference patches. Using the data path provided by Veracode scan results, the Generative AI backend selects from those patches and suggests fixes to known vulnerabilities, which are then customized to fit within customer code.
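
As a rough illustration of the "select a reference patch, then customize it" pattern described above, consider the following Python sketch. Everything in it is an assumption made for illustration: the `REFERENCE_PATCHES` table, the `suggest_fix` function, and the patch text are hypothetical, and plain template substitution stands in for the customization step that a Generative AI backend would perform. It does not reflect how Veracode Fix is actually built.

```python
from string import Template
from typing import Mapping, Optional

# Hypothetical curated patches, keyed by (flaw category, language).
# A real set of reference patches would be far larger and human-reviewed.
REFERENCE_PATCHES = {
    ("CWE-89", "java"): Template(
        'PreparedStatement stmt = conn.prepareStatement("$query");\n'
        "stmt.setString(1, $tainted_var);"
    ),
}


def suggest_fix(
    cwe: str, language: str, context: Mapping[str, str]
) -> Optional[str]:
    """Return a customized patch, or None when no reference patch exists.

    Returning None mirrors the point that not every flaw gets a fix:
    only known flaw categories with a curated patch are handled.
    """
    patch = REFERENCE_PATCHES.get((cwe, language))
    if patch is None:
        return None
    # Stand-in for model-driven customization: fill in names taken
    # from the scan result's data path (hypothetical keys).
    return patch.substitute(context)


fix = suggest_fix(
    "CWE-89",
    "java",
    {"query": "SELECT * FROM users WHERE name = ?", "tainted_var": "userName"},
)
```

In this sketch, a finding with no matching reference patch produces no suggestion at all, so the output space is bounded by the curated patch set rather than by whatever a model might generate.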

Veracode is innovating at the edge of what’s possible with Generative AI technology. But we insist on its practical application, and we respect the risks it introduces at least as much as we’re excited about its transformative impact.

Each suggestion Veracode Fix provides leverages thousands of hours of human knowledge applied and customized by a Generative AI system that is responsible by design to avoid emerging threats. Veracode Fix is delivering meaningful value without caveats about our dataset or training methodologies.

Experience responsible-by-design AI in practice. Schedule a demo of Veracode Fix today.