The State of Software Security Today

We’re living in an era where business competitiveness hinges on the speed and quality of software delivery. Some enterprises are struggling to keep up. Others are thriving.

Wherever they fall on that spectrum, all organizations race the competitive clock to deploy and evolve their game-changing applications. The question is, how well does application security keep up with it all? This is the fundamental question Veracode asked this year as our team examined the data for State of Software Security (SOSS) Volume 9.

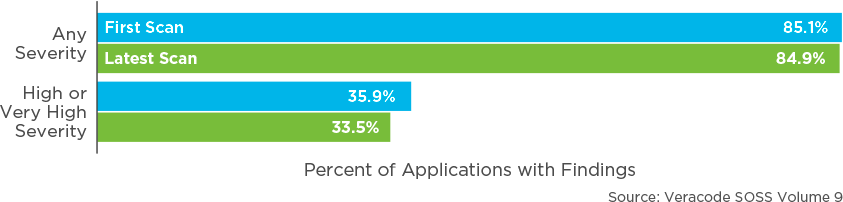

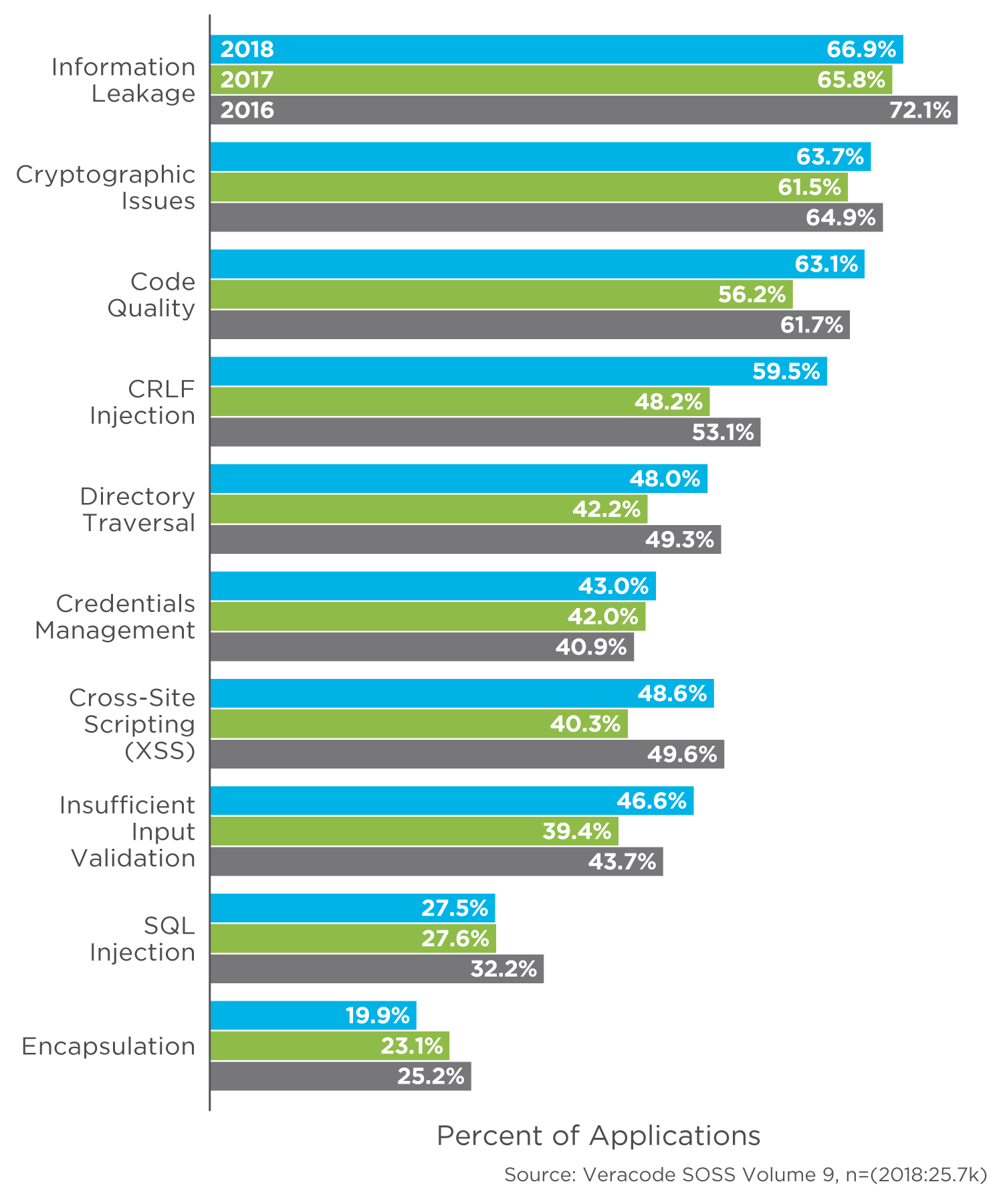

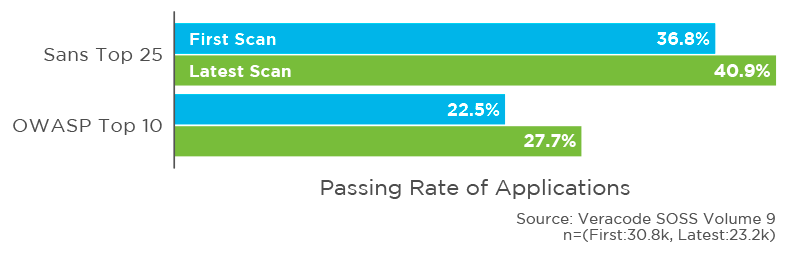

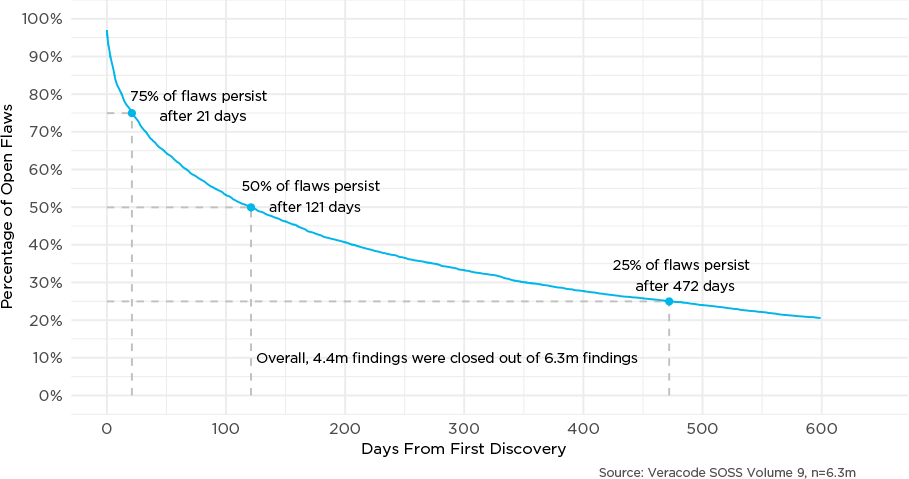

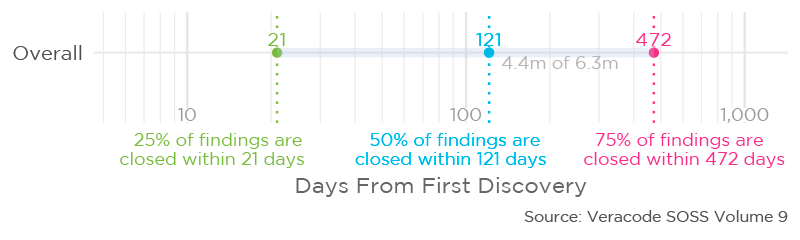

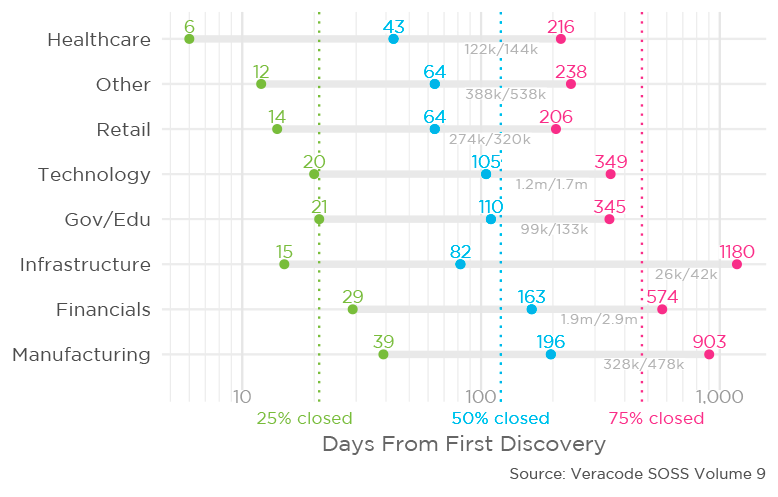

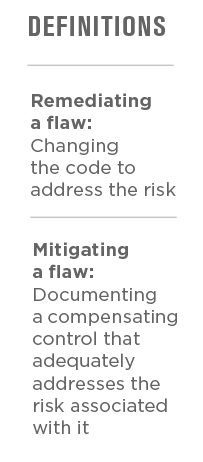

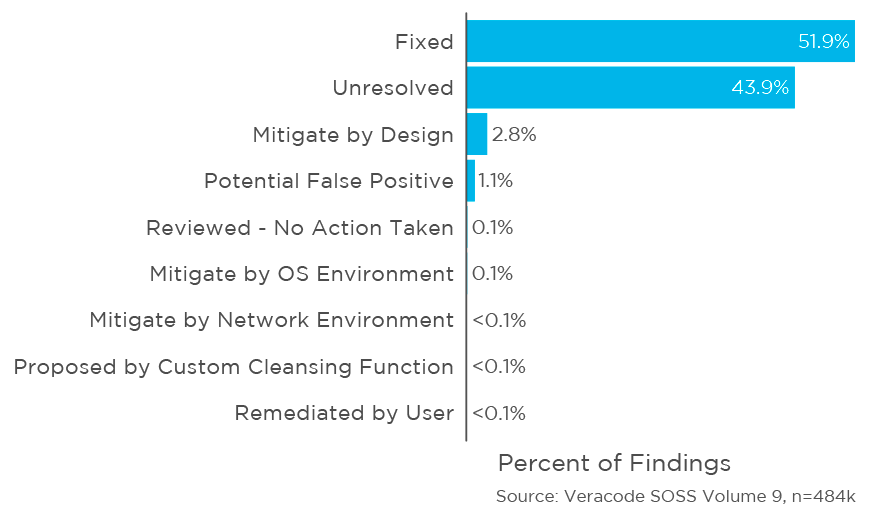

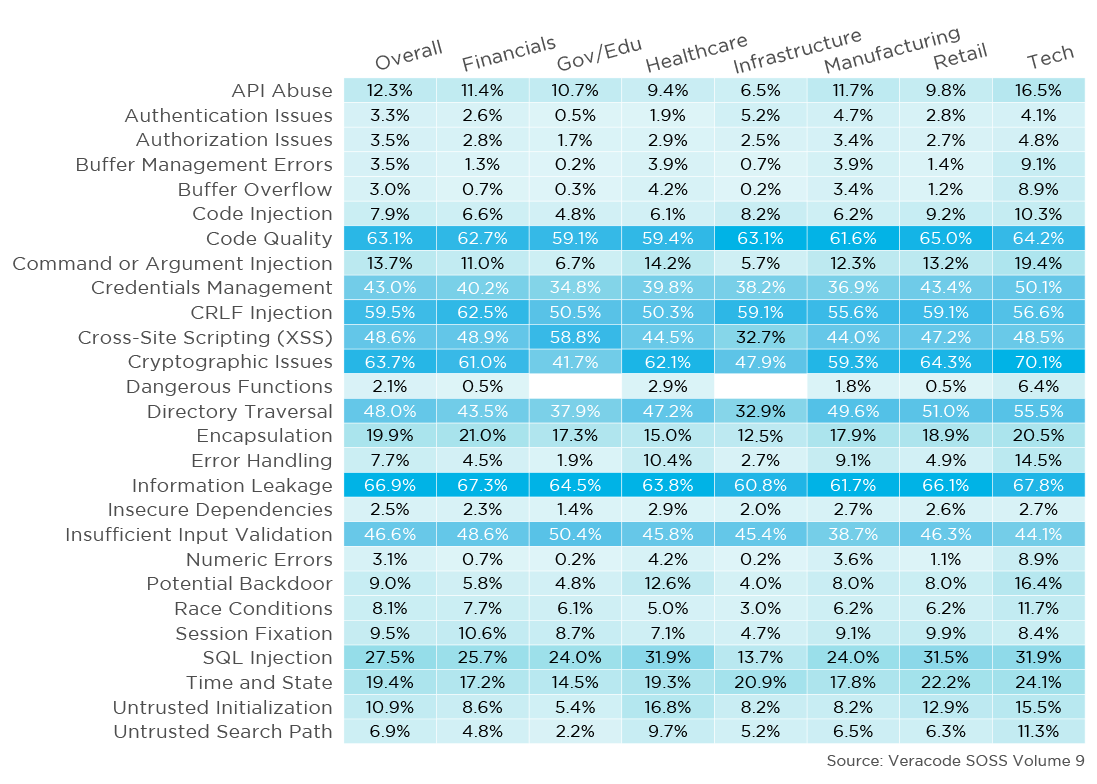

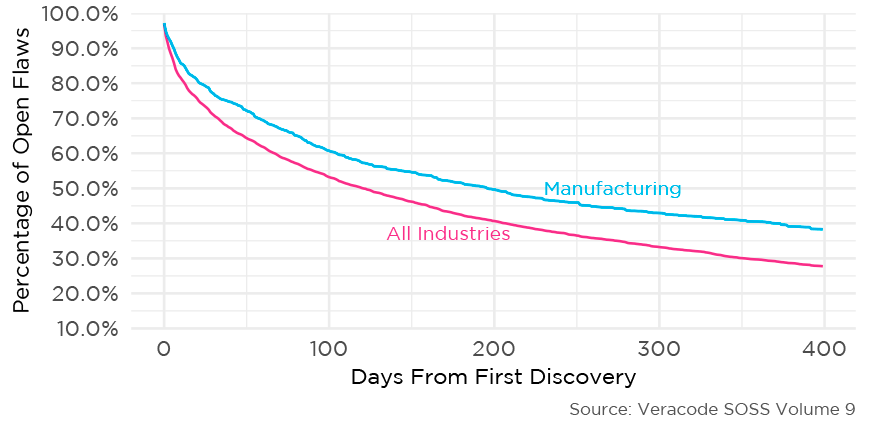

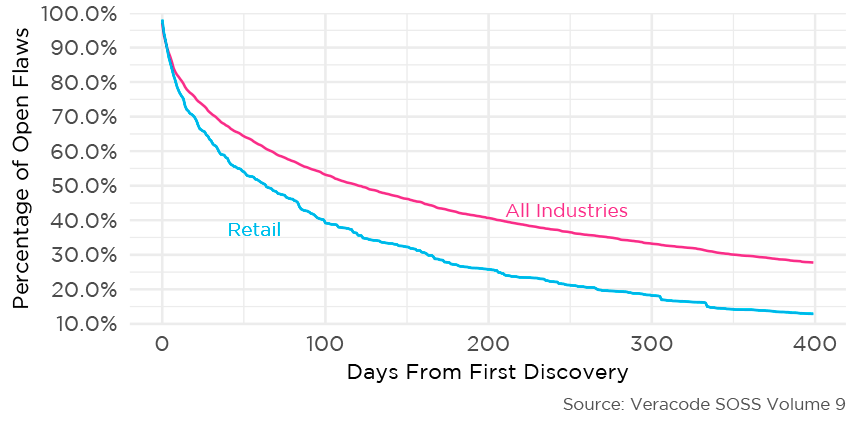

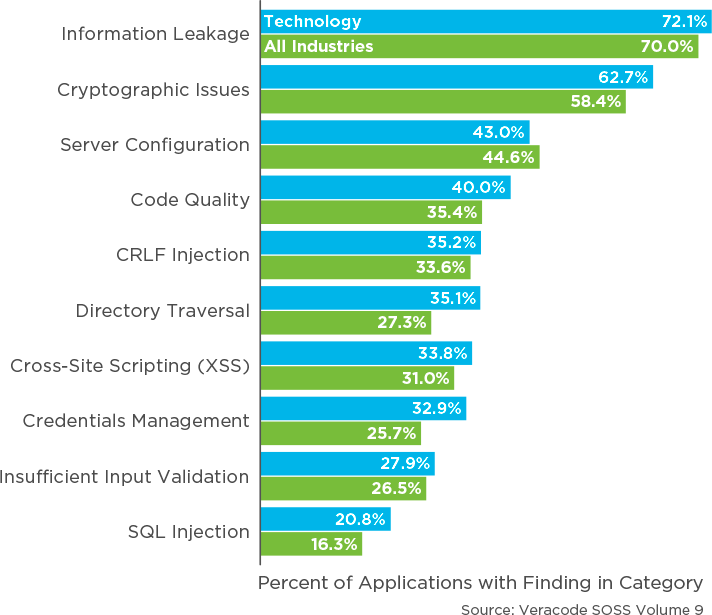

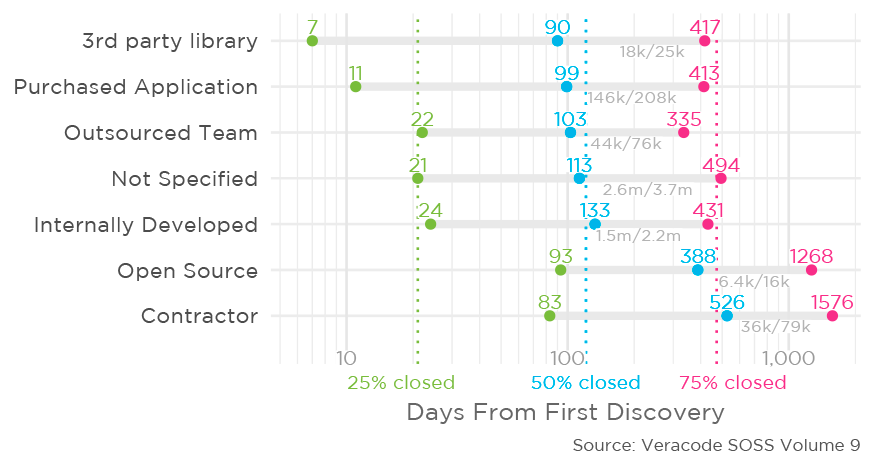

For a long time now, SOSS has provided a reliable yardstick for the most common vulnerabilities found in software, as well as how organizations are measuring up to security industry benchmarks throughout the software development lifecycle (SDLC). One thing we’ve always wanted to understand better, though, is how quickly these organizations are actually fixing flaws once they’ve been identified in application security scans.

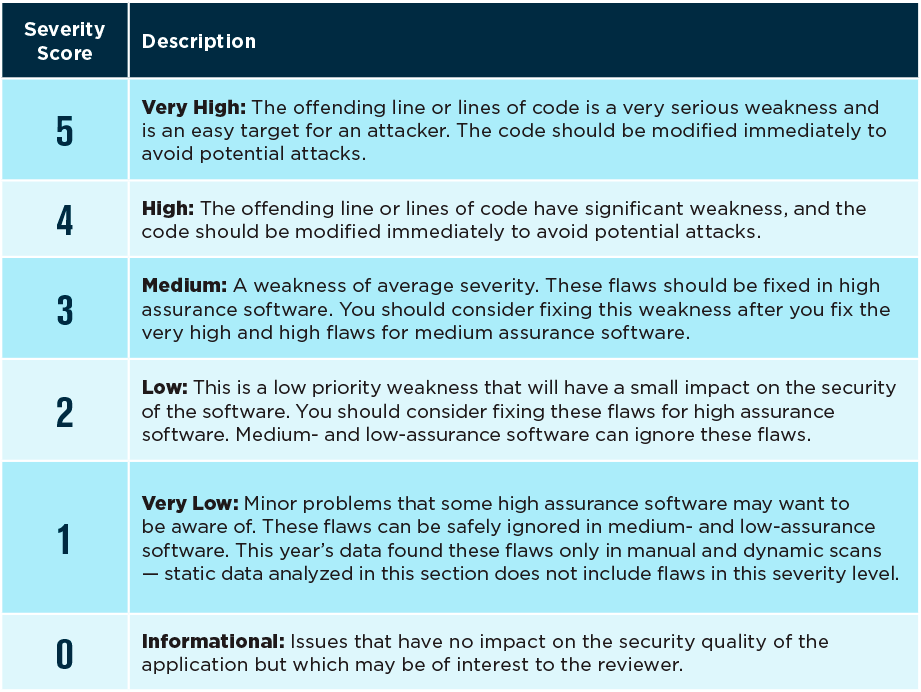

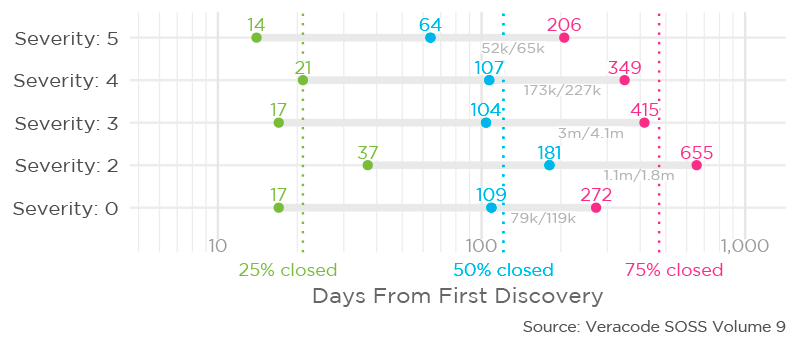

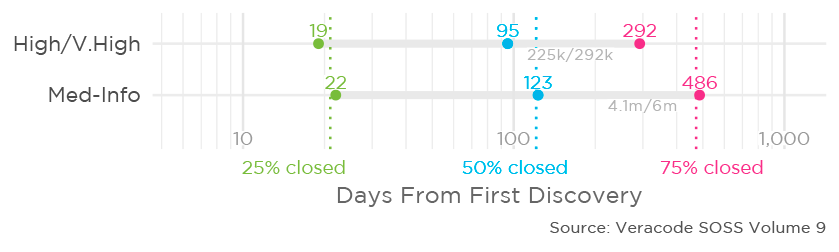

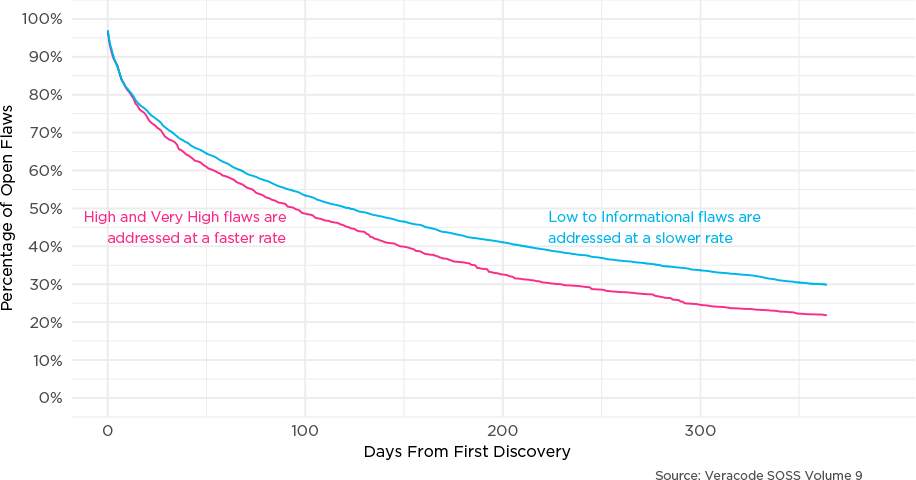

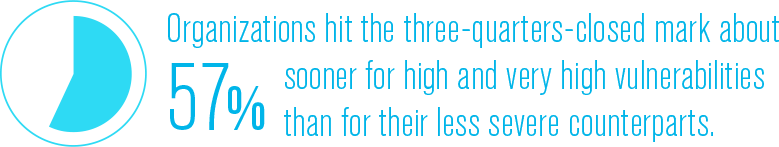

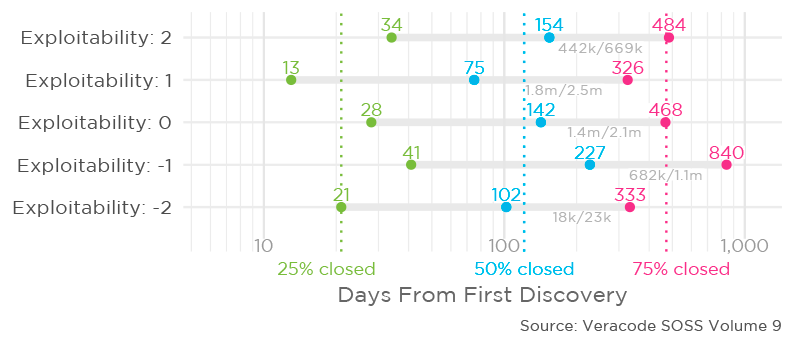

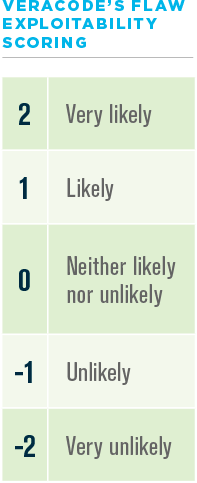

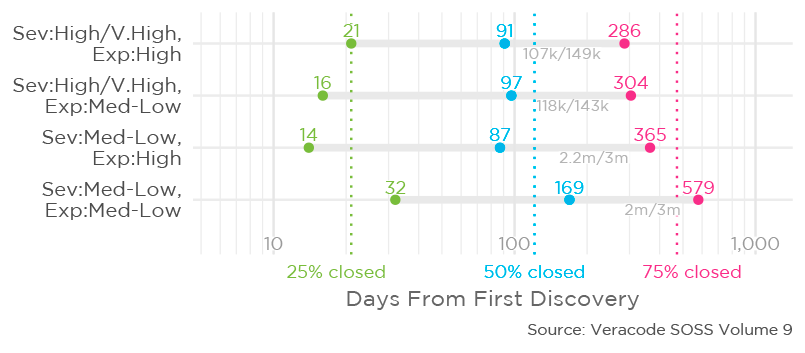

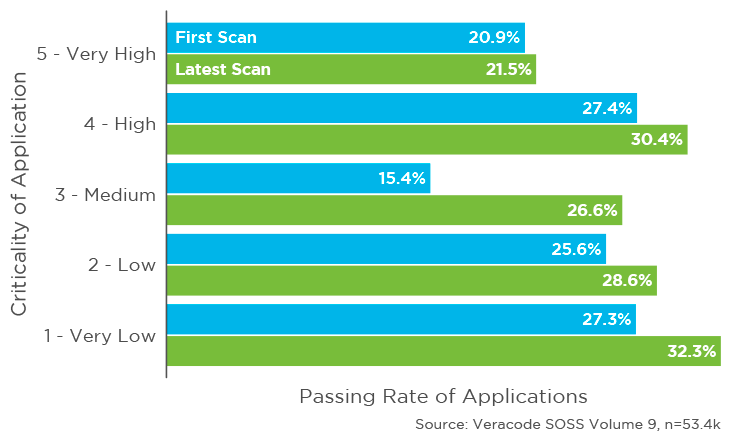

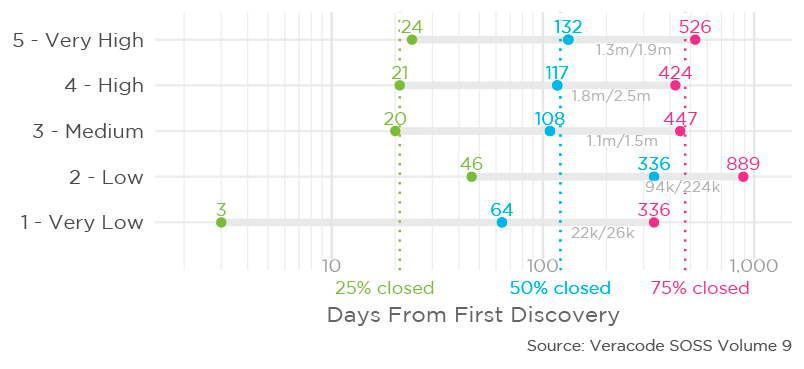

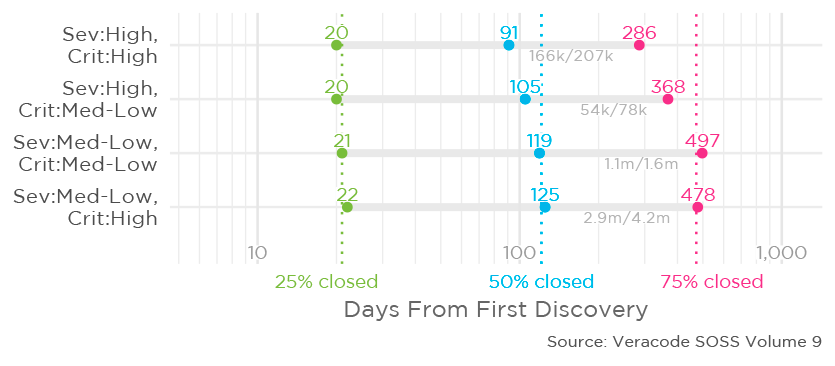

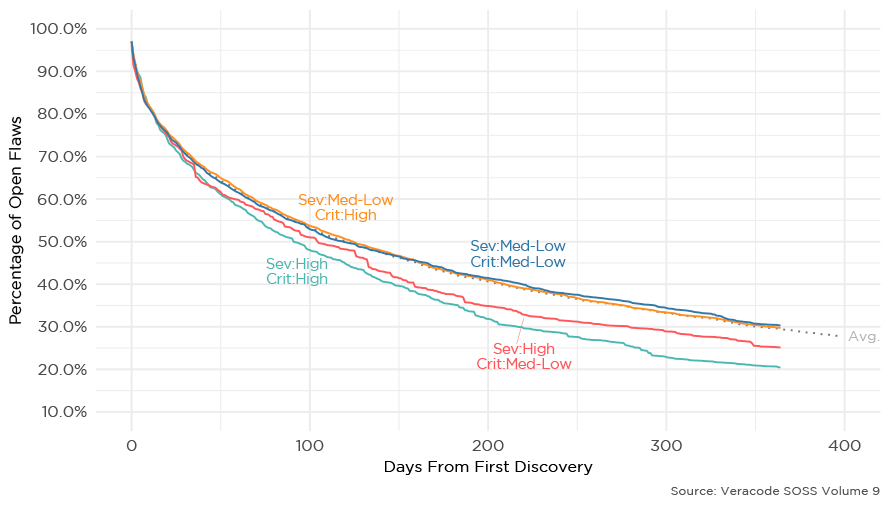

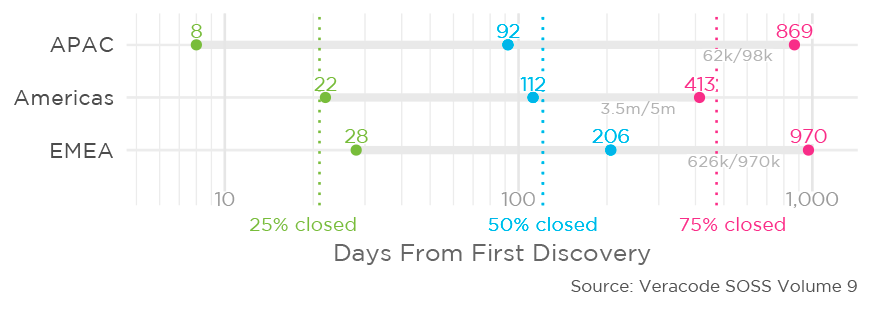

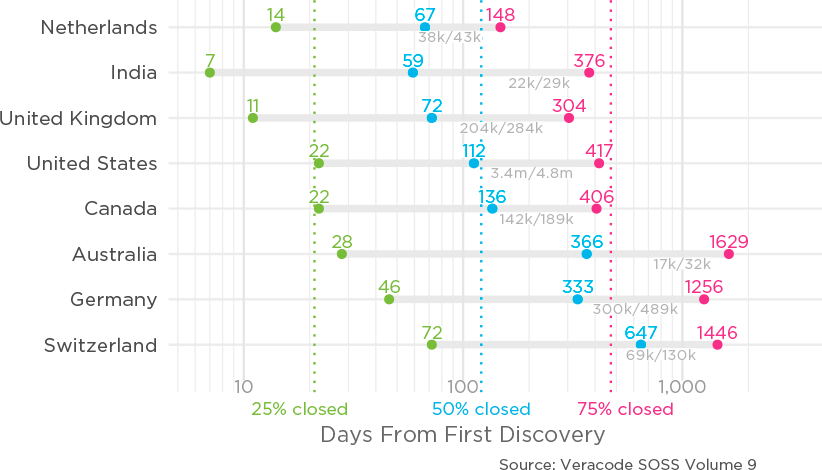

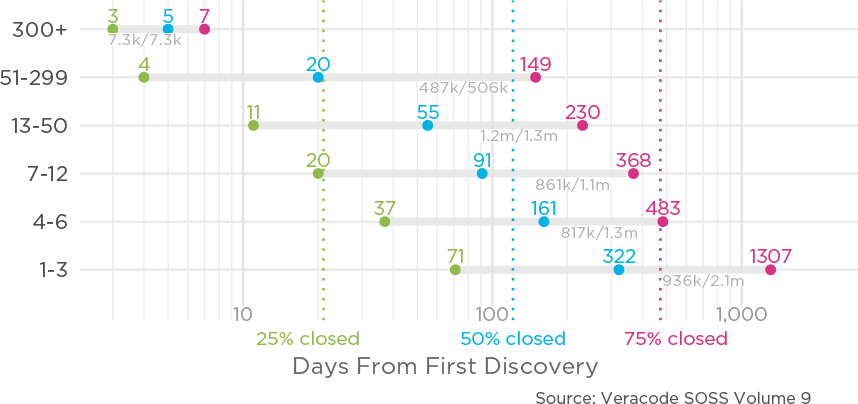

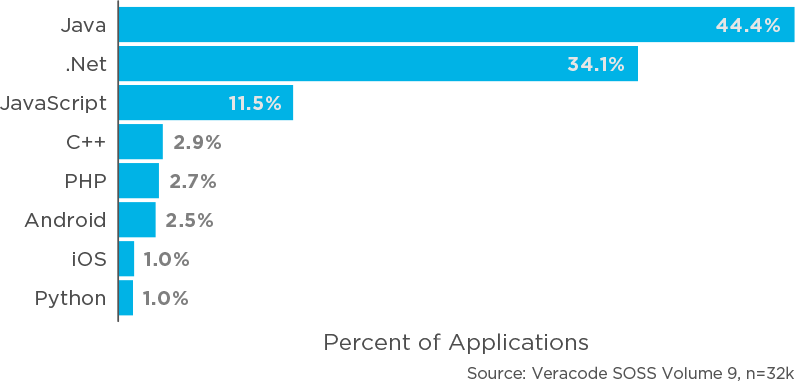

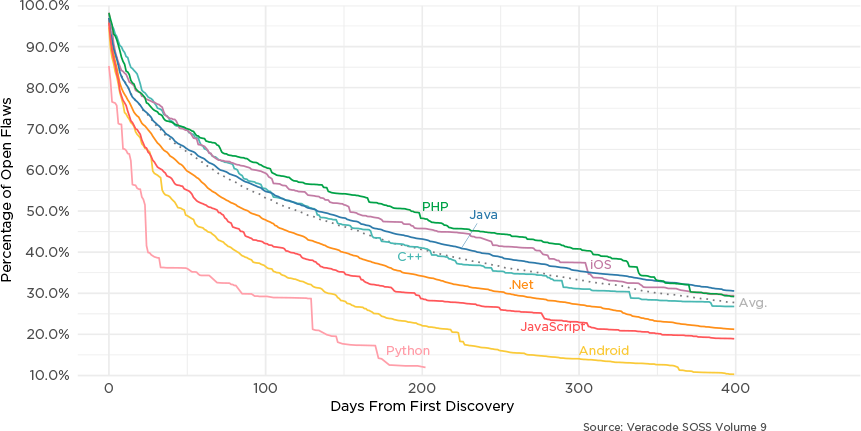

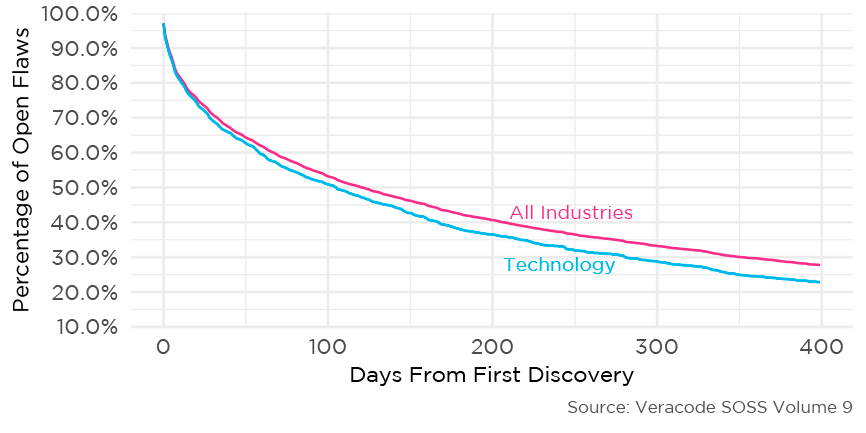

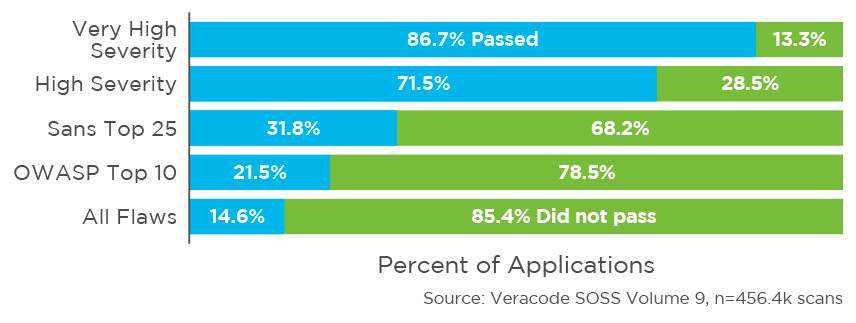

This year, we turned our data analysis up a notch by working with the data scientists at Cyentia Institute, so that we could gain better visibility into the factors that go into fixing flaws. Readers will find valuable insight on how factors like flaw severity, business criticality of applications, and exploitability of the flaws change the rate at which certain vulnerabilities are fixed.

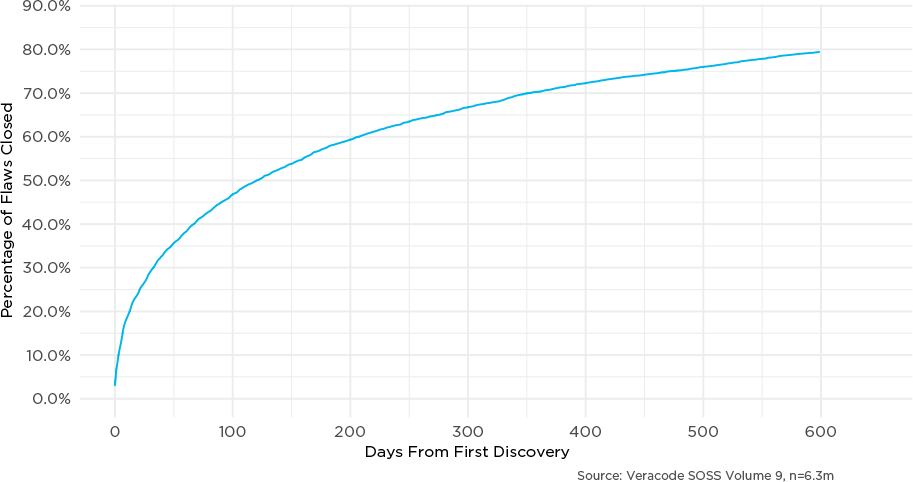

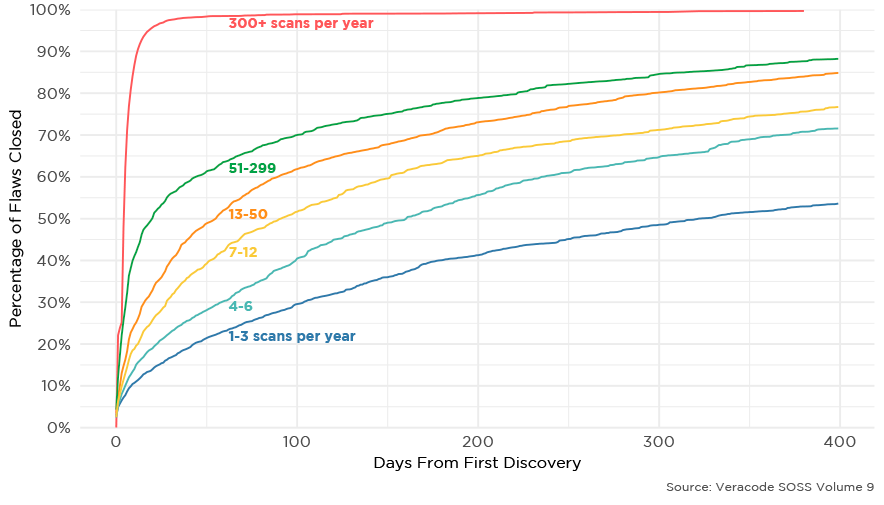

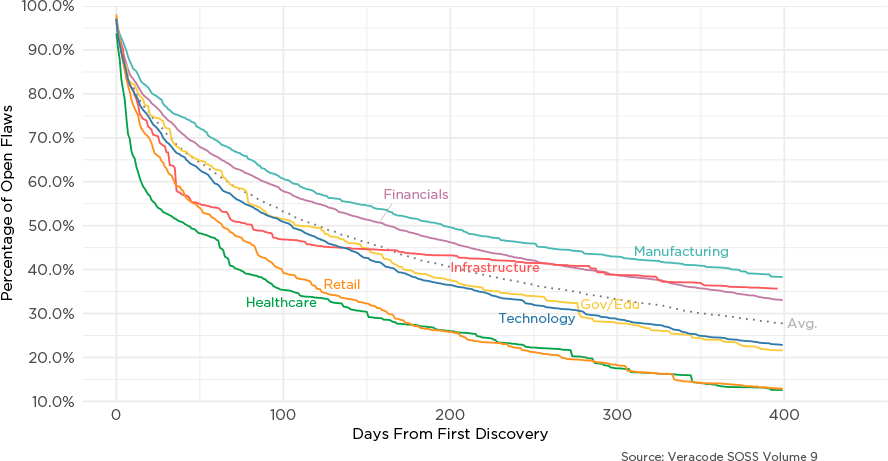

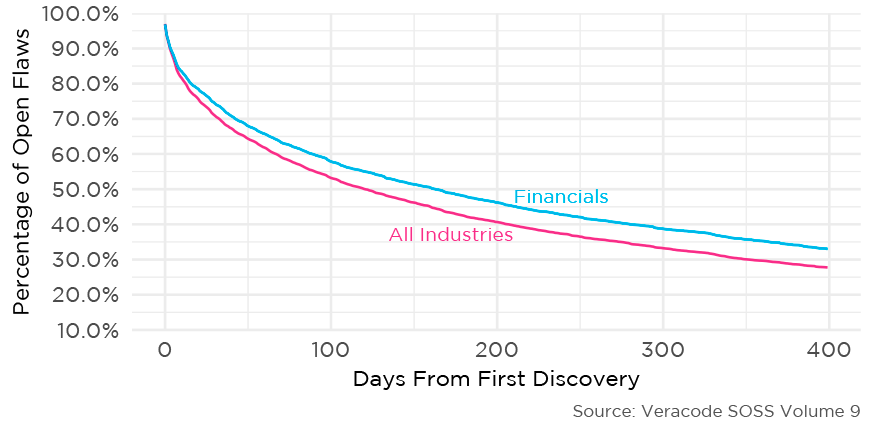

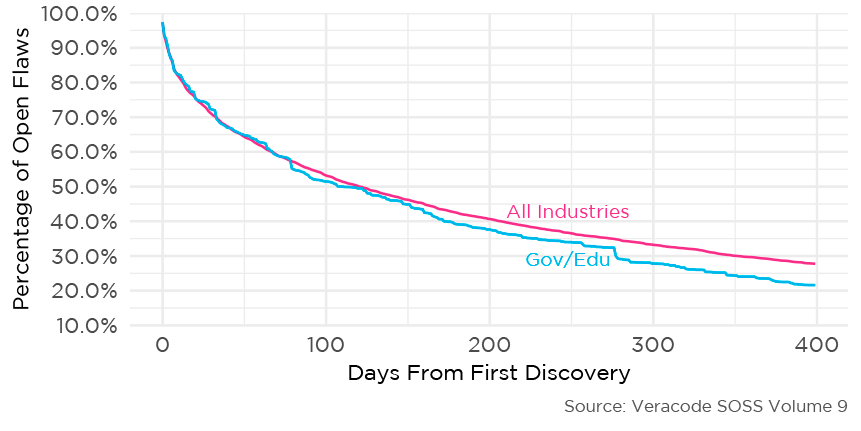

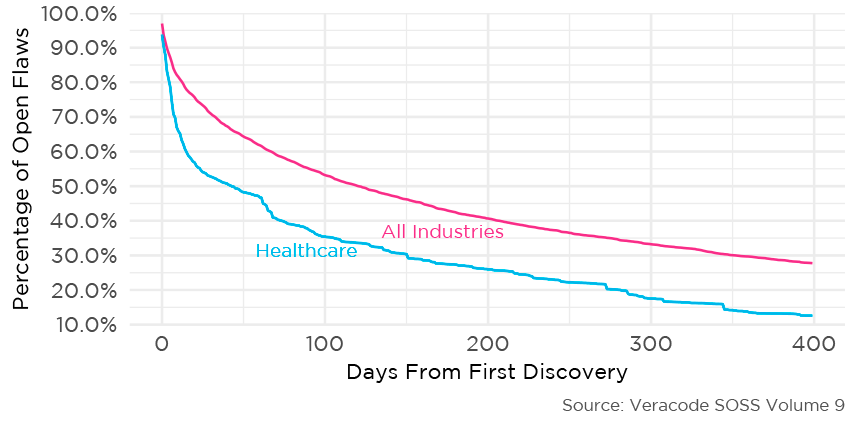

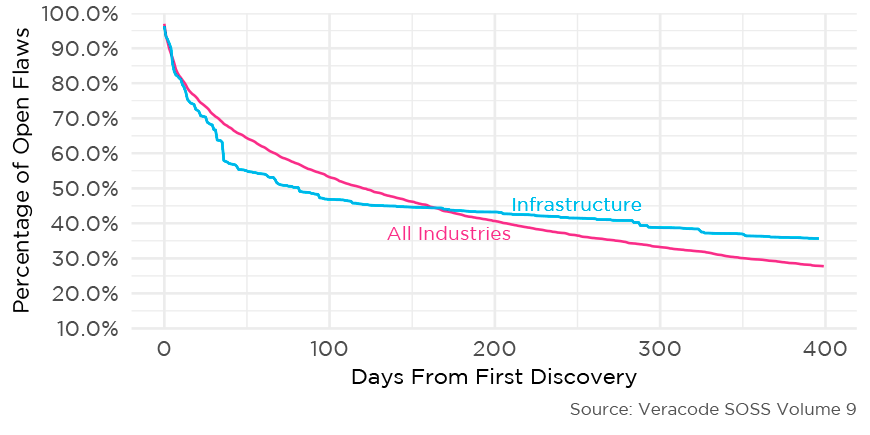

In many ways, our deeper look into the data confirmed what many industry veterans recognize intuitively: it takes time to fix security flaws. Contrary to what some security staffers might believe, developers simply can’t wave a magic wand over the portfolio to fix the majority of flaws in an instant, or even in a week. On top of that, there are other factors at play, including QA, product release cycles, and other exigencies of delivering software to the real world.

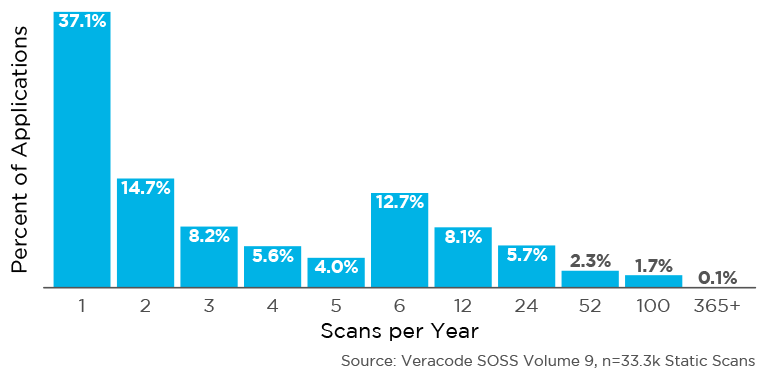

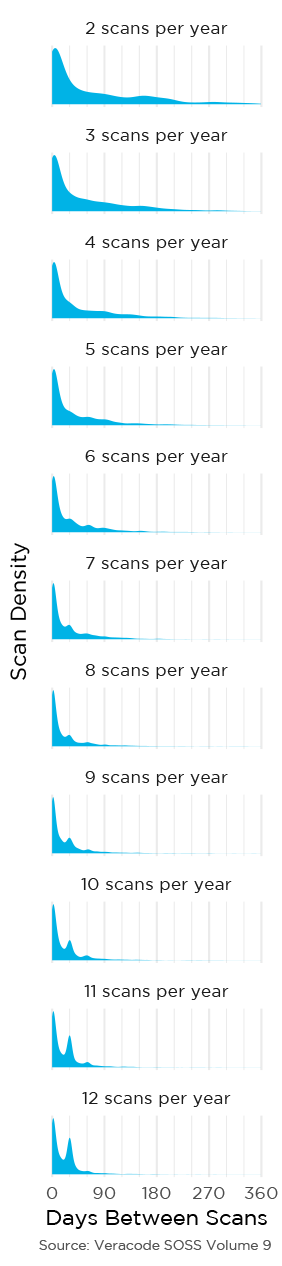

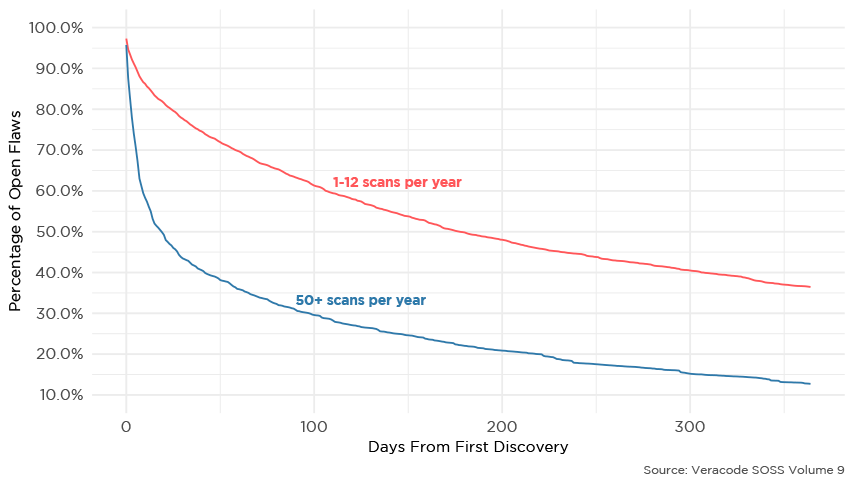

However, our data presents hopeful glimpses at potential prioritizations and software development methods that could help organizations reduce risk more quickly. At the top of that list is the DevSecOps mentality, which tends to incorporate more frequent security scans, incremental fixes, and faster rates of flaw closures into the SDLC. This year’s analysis shows a very strong correlation between high rates of security scanning and lower long-term application risks, which we believe presents a significant piece of evidence for the efficacy of DevSecOps.

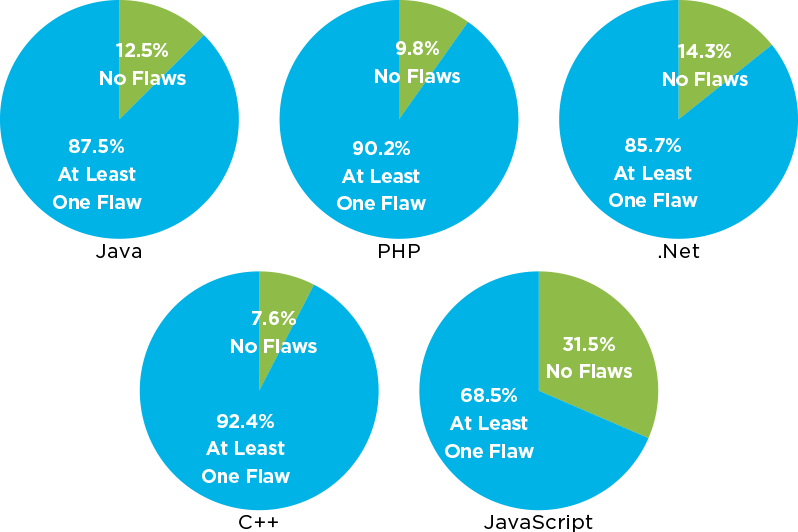

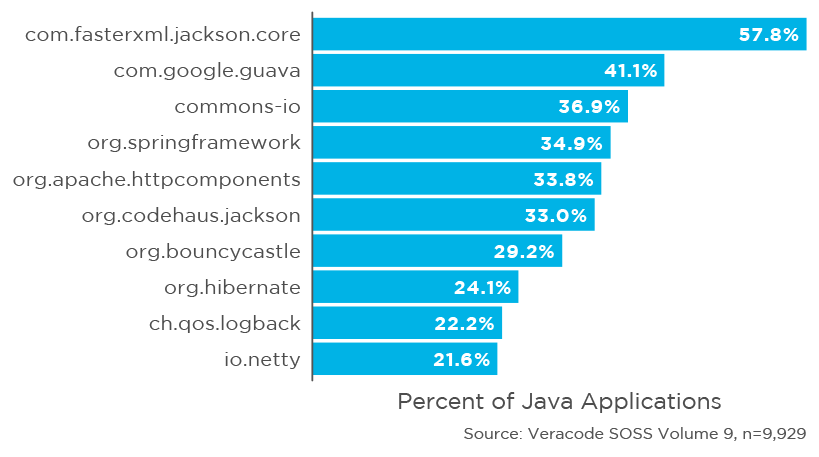

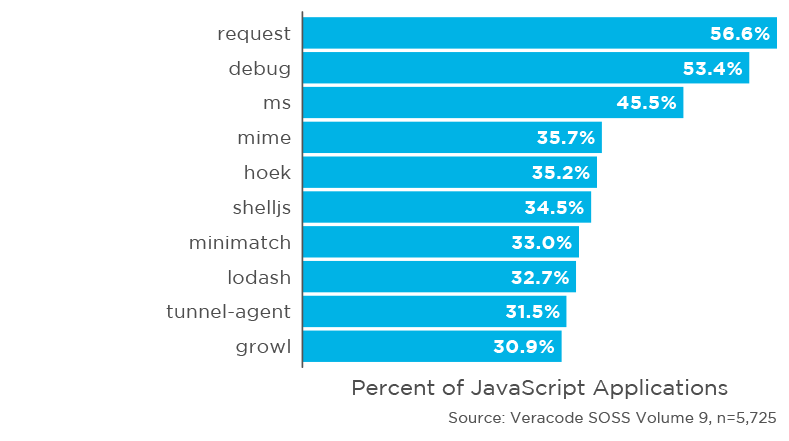

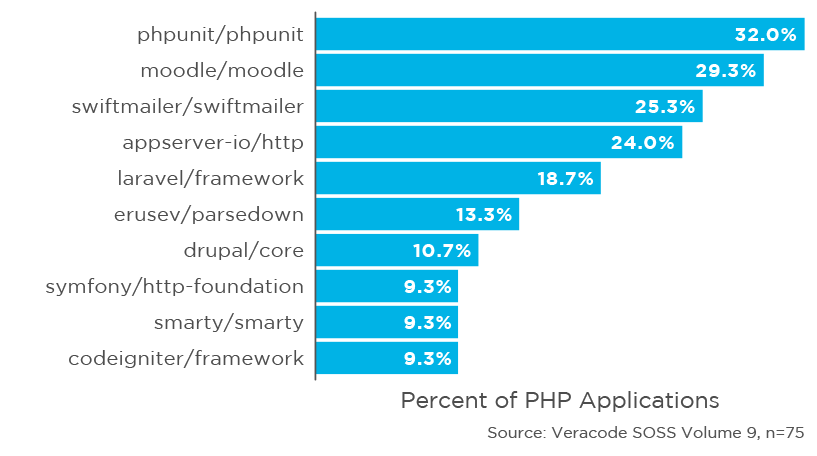

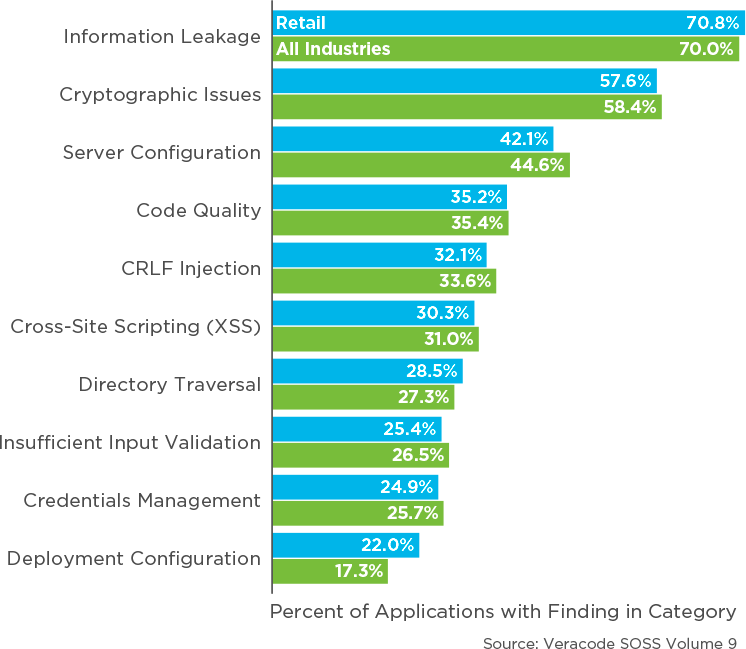

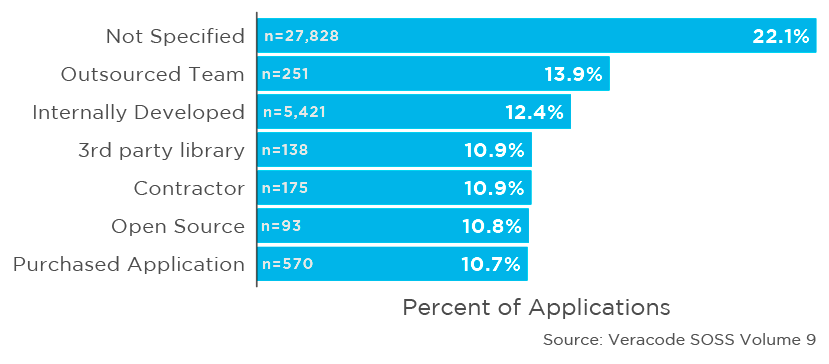

Alongside that, we offer a wealth of information about industry performance, third-party component risks, and vulnerability trends. We believe that this body of work gives security practitioners and developers alike valuable food for thought as they seek to improve their application security posture in the coming year.

Sincerely,

Chris Eng

Vice President of Research at Veracode